In October 2023, a meeting of a Boye & Co digital leaders community brought together about a dozen people to discuss topics of mutual interest. My role was to present on the topic of artificial intelligence.

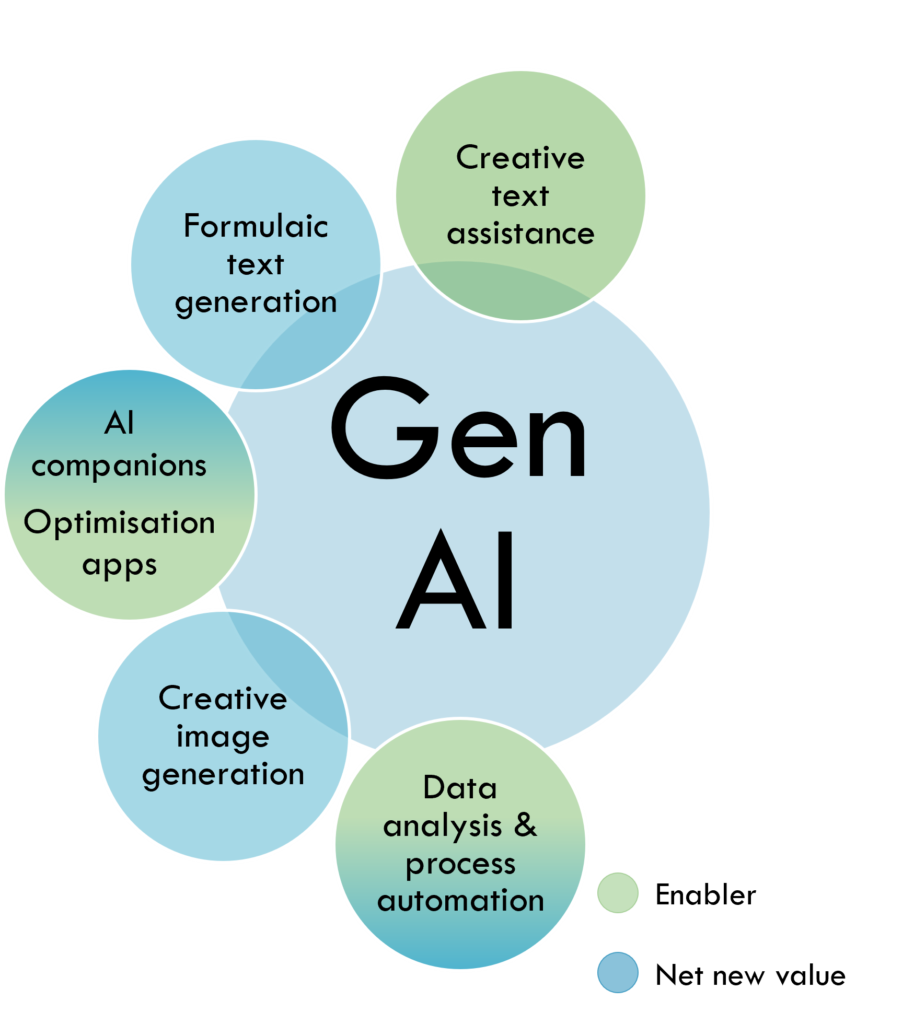

Because artificial intelligence is an area that is so wide and so varied, we focused on the aspects that interested digital leaders. During the day, I made notes on what people were discussing and then put together a mind map of sorts to create a visual that would cover the topics of interest to the digital leaders in the room. Everyone seemed to be interested in AI as it related to content: content generation, content production, and content management, so that’s what was covered. This is what the starting point looked like.

Traditional AI

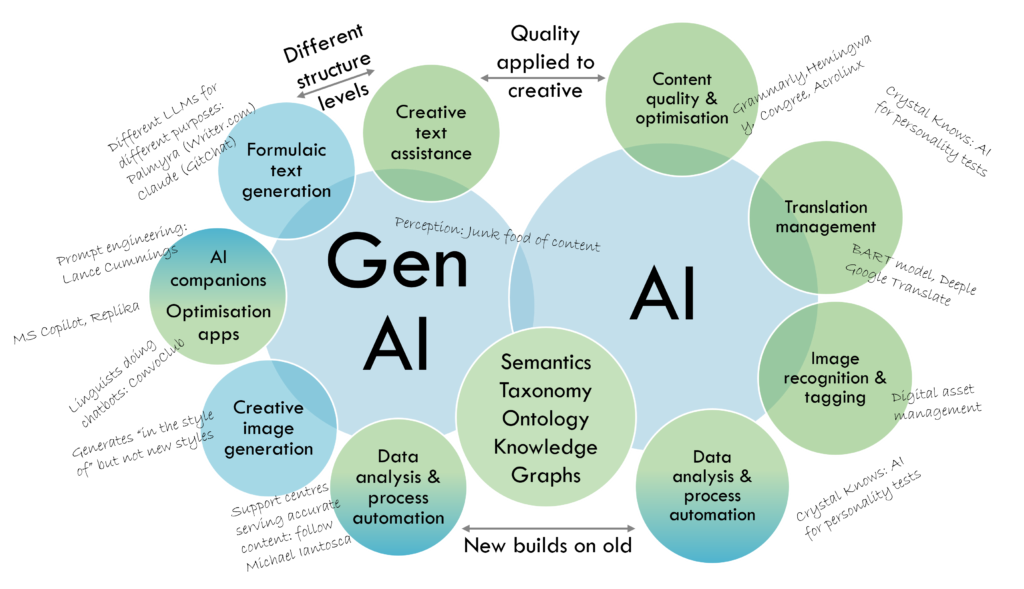

The first thing to note is that, in general, we seem to make a distinction between two eras of AI: traditional AI and generative AI. It may seem odd to call AI “traditional” but considering that AI has been around since the 1950s, there is a long and progressively sophisticated history of use of AI in tools meant to improve and/or automate content. Some common uses are in the following areas:

- Translation management. There’s been machine translation and translation memories since the 1950s, and neural machine translation has been around for at least a decade now. It’s quite a mature industry. This year, all of the major platforms are marketing themselves as incorporating AI in one way or another.

- Content optimisation. We’ve had things like spelling and grammar checkers for a long time now. The first AI-enabled content optimisation application used by multinationals was launched in the early 2000s. The app added to the usual spelling and grammar checking with tone and voice checking, disambiguation of meaning, and sentiment analysis.

- Image recognition. I remember how exciting it was when my friend, a Software Engineering Program Director at my alma mater, showed me how image recognition worked and some of the projects that he was involved with at the university. Today, we use this research to recognise, recall, and manage digital assets. Image recognition began in the 1950s and AI-enabled auto-tagging really gained ground in the mid-2010s.

- Data analysis. Tool-enabled data analysis goes back a long time, to the 1950s, and has become more sophisticated with the application of artificial intelligence. For example, the advances discussed in academic papers on how medical data is analysed with the help of AI are mind-boggling. The term robotic process automation (RPA) was coined in 2012, and combining RPA with AI moves data analysis to another level.

Generative AI

The year 2022 was the break-out year for generative AI. Although conversational programs have been around since the 1960s, when the rule-based Eliza powered the world’s first chatbot, there was little innovation until the mid-2010s, when natural language processing was widely adopted. So what changed? ChatGPT and DALL-E 2 were given simple, easy-to-use interfaces that democratised access to them. Anyone with an interest could interact and see instant, tangible results. There have been some basic uses of GenAI that we could have anticipated, such as replacing writers in order to produce rather mundane long-form content. Equally, there have been some extremely creative uses that have led to net new value.

Given that AI doesn’t actually “create” but “generates” through reformulating and re-interpreting existing content, it took a while until the limitations were understood. Generative AI was widely touted as being the miracle solution to all content problems. The “tell the LLM a few vague sentences and you’ll get award-winning text-based content and images” phase was short-lived. The word salads, the hallucinations, the unrealistic body appendages, and the invented references dulled the shine on the new toy. However, as the technical folks and the tech-inclined content people experimented and the dust began to settle, there was some clarity around what worked, what didn’t, and how you could improve the results produced.

- Formulaic content. This type of content was generated even before AI came on the scene, but generative AI takes it up a notch. Reporting of seismic activity (an earthquake of [magnitude] on the Richter scale was reported at [hh:mm] on [date] at [locale]…), summarising sports results, or any other content that follows recognisable patterns can be reliably generated and is a prime application of generative AI.

- Creative content. Generative AI is not great at producing long-form content that needs some sort of analysis or depth. Analysis and creativity are still in the power of the human brain. However, the creative uses are good as enablers, like having a personal assistant but one that never tires, sits by your side, and ruthlessly edits your text. It can be a muse, generating (mostly) relevant points that can be structured and fleshed out into useful content.

- Optimisation apps and AI companions. I’ve grouped them together because there is some sort of ongoing interaction, despite those interactions being vastly different. Not all LLMs are created equal, and some have been developed with particular functions in mind. AI companions could mean apps such as Microsoft Copilot, which acts as an assistant to content developers to optimise content quality – what used to be called an “assistant” – as well as assistance with drafting of emails and texts – a sort of predictive completion. This could include voice assistants such as Amazon’s Alexa or Apple’s Siri. Another type of AI companion, using a very different type of LLM, carries on conversations as a “synthetic friend”. These companions can chat, play games, roleplay, or even give advice.

- Image generation. Between last year and this year, the quality of the generated images has gotten exponentially better. Previously, a generated image of a person might yield someone with three hands or one hand with twelve fingers. Today, you’ll get a much more realistic look. Of course, the output is still generated from existing styles, from steampunk to Rembrandt to Hockney – anything that the model was trained on.

- Data analysis. AI already allows large volumes of complex data to be speedily crunched. What generative AI brings to the table is the ability to generate more and varied insights. The LLM’s understanding of language and patterns can produce results that could take ages for a person to realise. In addition, generative AI contributes to predictive analytics that have far-reaching implications; academic papers about cancer predictions through data analysis explain the importance of these developments. New aspects of process automation are also available. Rote tasks could be automated before generative AI; the ability to easily articulate more sophisticated tasks expands its repertoire. A simple example is the generation of Excel formulas and VBA code.
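
The formulaic-content pattern mentioned above (the seismic report with its bracketed slots) can be sketched in a few lines of Python. This is only an illustration of the older, pre-AI template-filling approach, not of a generative model; the `QuakeEvent` record, its field names, the date format, and the sample values are all assumptions made up for the example.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class QuakeEvent:
    # Hypothetical record shape; the field names are illustrative only.
    magnitude: float
    occurred_at: datetime
    locale: str


# The fixed sentence pattern from the article, with named slots.
TEMPLATE = (
    "An earthquake of {magnitude:.1f} on the Richter scale was reported "
    "at {time} on {date} at {locale}."
)


def render_report(event: QuakeEvent) -> str:
    """Fill the fixed sentence pattern with the event's values."""
    return TEMPLATE.format(
        magnitude=event.magnitude,
        time=event.occurred_at.strftime("%H:%M"),
        date=event.occurred_at.strftime("%Y-%m-%d"),
        locale=event.locale,
    )


# Made-up sample data, purely for demonstration.
event = QuakeEvent(4.7, datetime(2023, 10, 5, 14, 32), "Example Bay")
print(render_report(event))
```

A template like this always produces the same wording. Where generative AI "takes it up a notch" is in varying the phrasing and adding context around the same structured facts, which a fixed template cannot do.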

By the time we finished our discussion, the graphic looked something like this (but messier).

Discussing the universal issues

The other discussion points that came up had to do with universal aspects of AI across the board. These are not related to function or efficacy, but to social implications arising from the rapid adoption of artificial intelligence, particularly now that generative AI can be used by a wide range of people, many of whom have little or no training in setting appropriate parameters when generating content. These discussion points are not a comprehensive list but a good starting point for thoughts during your own pursuits using generative AI.

- Information integrity. When newspapers were queried about articles referenced by ChatGPT which turned out to be non-existent articles, this known problem became a hot topic. What are the sources being used to train the models? How accurate are the sources? Are they traceable? Can citations be verified for authenticity?

ChatGPT is making up fake Guardian articles. Here’s how we’re responding – The Guardian

Large Language Models Don’t “Hallucinate” – Better Programming

Different ways of training LLMs – Towards Data Science

- Ethics. There is little to no transparency about what data is collected and how it is being used. Not only is data gathering exploding, so is data brokering. The uproar about the change in Zoom policies is just the tip of the iceberg. What privacy measures are in place? Can individuals be identified if data from multiple sources is concatenated? How good is the data quality? How transparent is the data gathering? Is it being brokered to reputable entities? Is there an ethicist overseeing data collection?

Artificial Intelligence: examples of ethical dilemmas – UNESCO

AI Ethics And AI Law Clarifying What In Fact Is Trustworthy AI – Forbes

Great promise but potential for peril – Harvard Gazette

- Bias. Because the models are being trained on historical information, inherent cognitive bias creeps in, getting replicated in the LLMs. Are people being denied social, financial, employment, and other services because of bias? Is software for government, law enforcement, and social welfare being examined for bias? How likely are people to be misidentified by, for example, facial recognition? Are population segments most affected by biases involved in the testing of bias?

Generative AI models are encoding biases and negative stereotypes in their users – Science Daily

Eliminating bias in AI may be impossible – a computer scientist explains how to tame it instead – The Conversation

Ageism, sexism, classism and more: 7 examples of bias in AI-generated images – The Conversation

- Model quality. As models are trained on information that includes some generated by the LLM itself, we can see temporal degradation. In addition, some agents provocateurs have undertaken model or data poisoning to thwart training of models using their intellectual property in an attempt to protect creator copyright. How do you know whether the model you use as a consumer has degraded? Are you being informed about any data poisoning that may skew your reliance on generative AI for data?

Why Machine Learning Models Degrade in Production – Towards Data Science

91% of ML Models degrade in time – NannyML

Emerging cyber-security threats in 2023 from AI to quantum to data poisoning – CSO

This new data poisoning tool lets artists fight back against generative AI – MIT Technology Review

- Social. Social implications are a vast topic on their own. We’ve seen the application of AI in countries such as China (overt) and the USA (covert), creating a surveillance society. Since the time that Cambridge Analytica first manipulated voters through micro-targeting, there has been an explosion of the use of AI to influence election outcomes. How can we protect ourselves and the vulnerable from being exploited? Where are the checks and balances that preserve our social structures?

The Global Expansion of AI Surveillance – Carnegie Endowment for International Peace

AI Cameras Took Over One Small American Town and Now They’re Everywhere – 404 Media Podcast

The AI-Surveillance System Symbiosis in China – Center for Strategic and International Studies

Artificial Intelligence Has the Power to Destroy or Save Democracy – Council on Foreign Relations

- Sustainability. The carbon footprint of AI is substantial. Generative AI takes massive amounts of computing power, which is driving both the expansion of data centres and the energy consumption to keep the servers cooled. From the extraction of raw materials to the production of the supercomputers, the construction and powering of the data centres, and the ongoing costs from training the models to customer usage, there is a large environmental cost, at a time when industry is pushing the envelope of how much the earth can take before reaching a critical tipping point. When will standards for measuring AI’s carbon footprint emerge? How can we measure our own carbon footprint when using generative AI? What is the real cost of generating those dozens of images for one-time use on social media?

We’re getting a better idea of AI’s Carbon Footprint – MIT Technology Review

The Carbon Footprint of Artificial Intelligence – Communications of the ACM

A Computer Scientist Breaks Down Generative AI’s Hefty Carbon Footprint – Scientific American

AI’s Growing Carbon Footprint – Columbia Climate School

Balancing positive outcomes with cautions

AI can have negative outcomes, as we have already seen in dozens of high-profile scandals. AI can be a tool for bad actors to spread misinformation, but misinformation can also be spread “without malice” – when a model answers a question with a best guess which, in turn, gets lots of engagement, which leads existing reporting structures (that base accuracy on engagement levels) to boost the inaccurate information.

An LLM obviously lacks moral judgement, which can create some dubious results (see ethics, social, and bias, above). People who barely understand technology, let alone AI, are now targets. Scammers are looking to AI for new ways to separate marks from their money. Deepfakes are being used to manipulate, humiliate, or titillate. Voice manipulation has been used for everything from monetising actors’ voices without compensation to investment fraud to attempting to subvert troops during war.

Artificial intimacy is another aspect that can lead to misinformation. It ranges from the relatively benign “I’m sorry, I’ll change my answer to agree with you” to becoming complicit in the downward spiral during a mental health crisis.

We owe it to ourselves to ask questions such as: How will we protect the marginalised and vulnerable members of society? How are regulations being updated to factor in developments in AI? Can regulations be enforced, and would the impetus be there to enforce them? How can regulations be enforced if detection is not possible? How can we protect ourselves and be protected from the increasingly sophisticated emotional manipulation seeking to influence our decisions?

Deepfake Technology: Assessing Security Risk – American University

Can AI therapy solve the mental health crisis? – New Statesman Podcast

‘It could have me read porn’: Stephen Fry shocked by AI cloning of his voice in documentary – The Guardian

The Dark Side of Generative AI: Automating Inequality by Design – California Management Review, Berkeley University

Let’s end on a hopeful note. There are many positive outcomes from use of generative AI. It would not be fair to discuss so many cautions without covering some of the ways that AI is being used for good. AI-powered robots are teaching English to preschoolers. Advanced data analysis is leapfrogging medical findings. Work productivity and opportunities for net new value abound. Using AI when monitoring, say, network traffic, can detect and deflect threats. The ability of AI to connect events that could be overlooked by humans can help us make better decisions. AI has the potential to find errors in formulas and solve complex problems, whether averting danger to patients, business forecasting, or modelling climate change.

That said, while AI has the potential to help with the analysis and implementation, the impetus to do so still lies with humans. There may always be bad actors, but there are always good actors, as well, who bring ideas to the table along with the ethics to ensure that as the tides rise, we are raising all boats, not just the yachts.

Can Artificial Intelligence Help See Cancer in New, and Better, Ways? – National Cancer Institute

Generative AI could transform work; boosting productivity and democratising innovation – Oxford Martin School

How can AI support diversity, equity and inclusion? – World Economic Forum

Note that links to articles were all verified 2023-11-05. Many other sources were consulted before and during the creation of this article, and only a few chosen in the interest of not overwhelming readers with information.